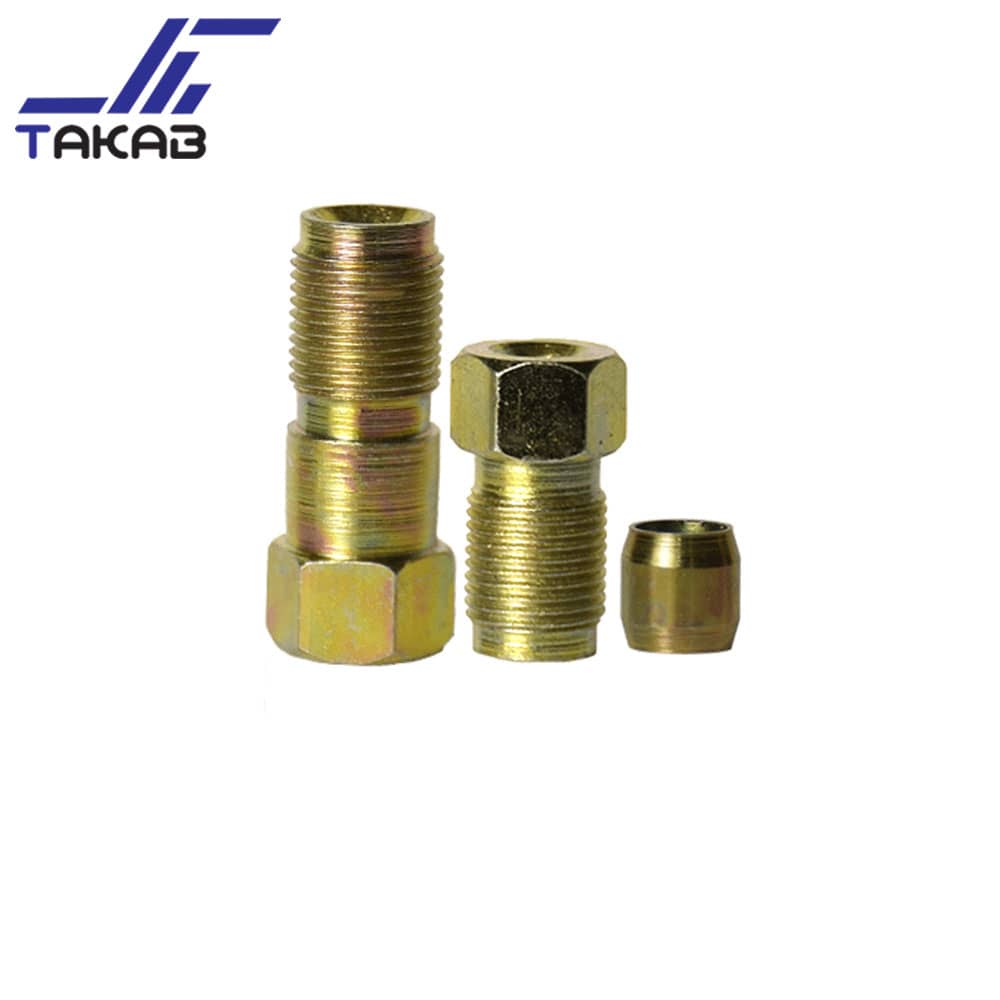

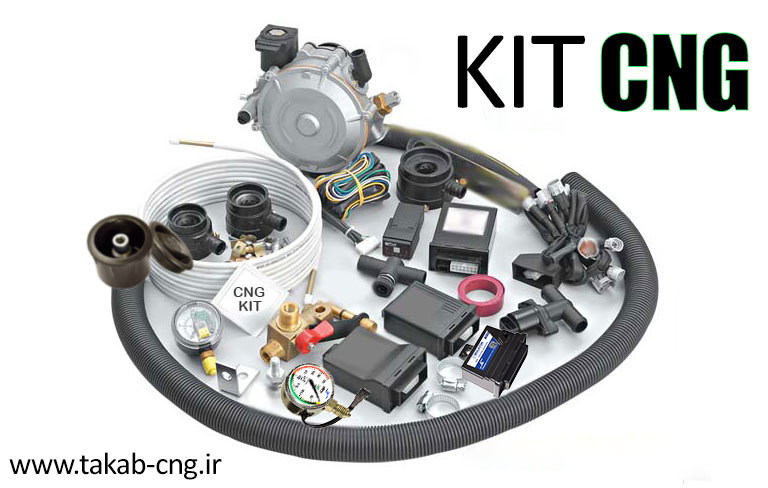

Some of our products

Quality comes first in everything we produce

Why Takab CNG (تکاب CNG)?

Omid Behsazan (امید بهسازان), one of the leading companies in manufacturing industrial products and parts, has based its quality management system on the ISO 9001:2008 standard. The company established this system to maintain and improve its position in the industry, expand its market, and stay competitive against rival products in domestic and foreign markets. In pursuit of its qualitative and quantitative goals, it has set out its policy as follows.

Product warranty

Because of the high quality of our products and our confidence in our technical expertise, every product comes with a 6-month after-sales warranty.

The highest standards

The company has based its product quality management system on the ISO 9001:2008 standard and continually improves the quality of its manufactured products and parts by adopting new methods and technologies.

Professional team

By employing specialized, experienced staff across industrial, technical, and engineering fields, along with a quality control unit and a research and development unit, Takab CNG has become one of the active manufacturers in the country's CNG sector.

Our achievements

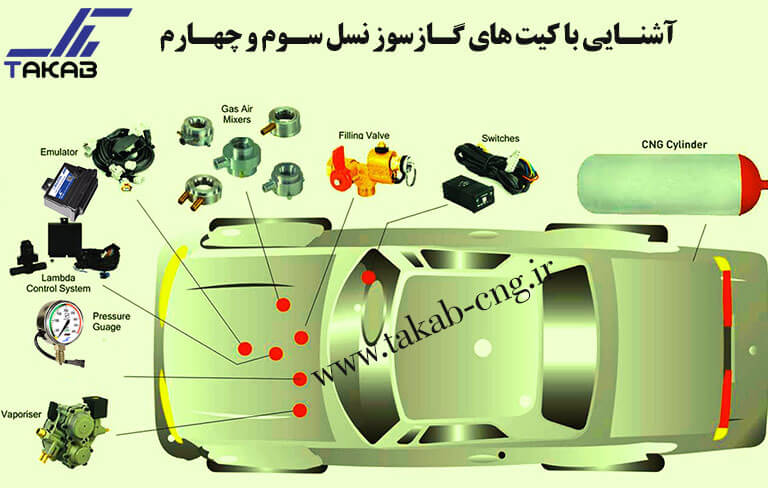

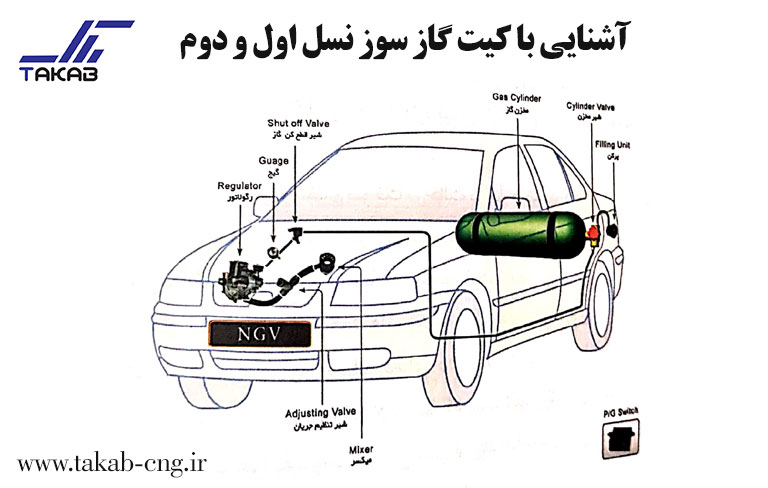

To date, Takab is proud to have registered inventions including the production of pressure sensors, LPG sensors, galvanized and electroless-plated filling valves, coated steel clamps, aluminum mixers, and more.